
Asynchronous IO with Boost.Asio - Michael Caisse

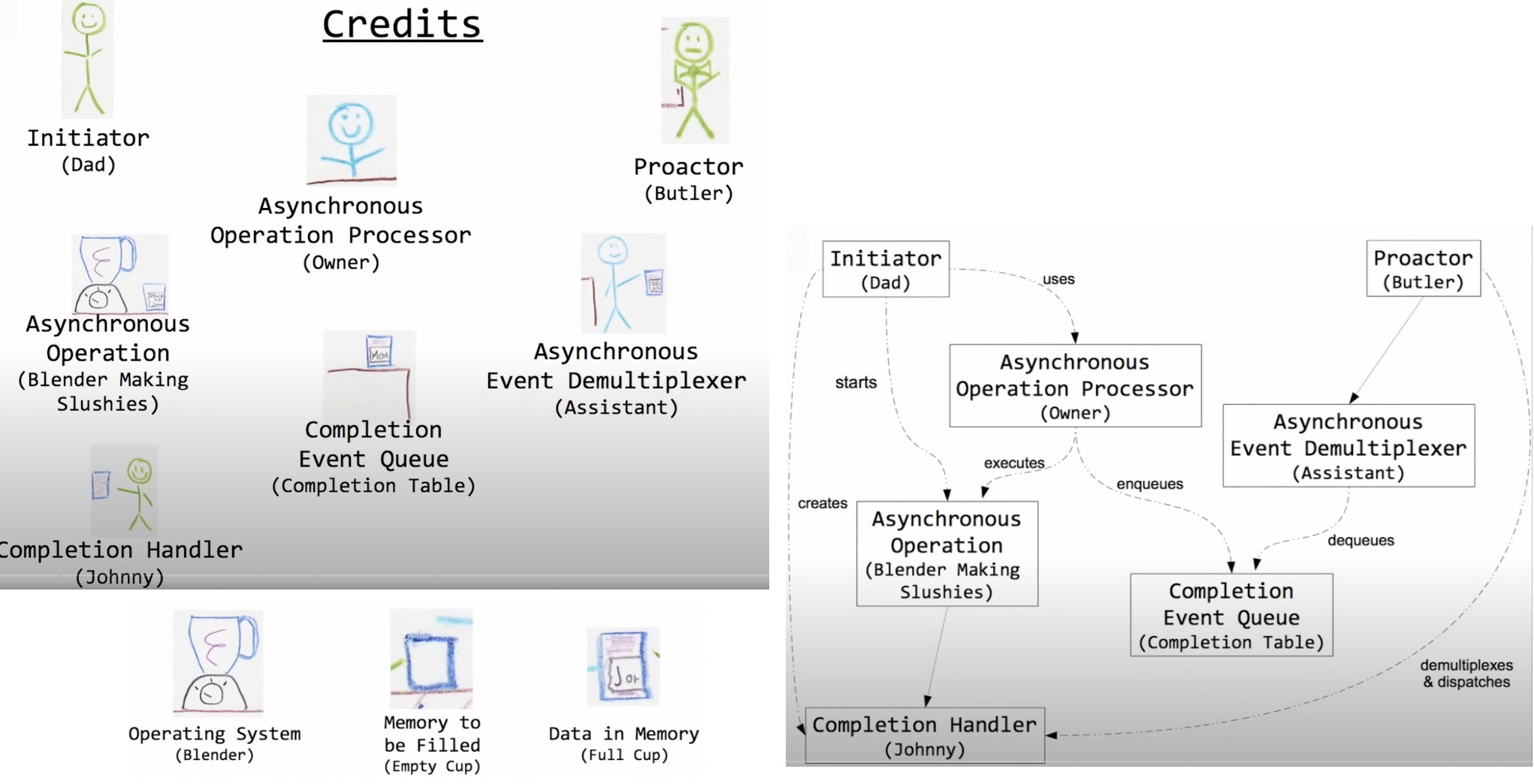

The story of the proactor model


Scenario: a beach day story involving a family, butlers, and slushie orders.

- A family goes to the beach; the father (initiator) asks the slushie shack owner (asynchronous operation processor) to prepare slushies, instructing them to be delivered to his son (completion handler) by the butler (proactor).

- The owner starts making the slushie (asynchronous operation) with the help of a blender (operating system), and once done, it's moved to a completion table (completion event queue) by an assistant (asynchronous event demultiplexer).

- The butler, upon receiving the slushie from the assistant, delivers it to the son.

- The process reflects the handling of multiple requests and the coordination necessary to manage asynchronous operations efficiently.


Roles Mapped to Proactor Model Components:

- Initiator: Dad

- Asynchronous Operation Processor: Owner

- Proactor: Butler

- Asynchronous Event Demultiplexer: Assistant

- Completion Handler: Johnny (the son)

- Asynchronous Operation: Making the slushie (with the blender, i.e. the operating system)

- Completion Event Queue: Completion table

- Additional roles include the operating system (blender) and memory concepts represented by the empty and full cups.
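To make the mapping concrete, here is a toy proactor in standard C++. It is only a sketch of the story's roles under simplifying assumptions (one worker thread per order stands in for the blender/operating system, and names such as `toy_proactor` and `async_make` are hypothetical); it is not how ASIO is implemented. The caller of `async_make` is the dad, `async_make` is the owner, the worker thread is the blender, pushing onto `completion_table_` is the assistant, `run()` is the butler, and the `on_done` callback is Johnny.

```cpp
#include <cassert>
#include <condition_variable>
#include <functional>
#include <mutex>
#include <queue>
#include <string>
#include <thread>
#include <utility>
#include <vector>

// Toy model of the story's roles (hypothetical; not ASIO's implementation).
class toy_proactor {
public:
    // Owner (asynchronous operation processor): accept an order and start the
    // asynchronous operation on a worker thread that plays the blender/OS.
    void async_make(std::string flavor, std::function<void(std::string)> on_done) {
        std::lock_guard<std::mutex> lk(m_);
        ++outstanding_;
        blenders_.emplace_back([this, flavor = std::move(flavor), on_done = std::move(on_done)] {
            std::string slushie = flavor + " slushie";  // the asynchronous operation
            // Assistant (demultiplexer): place the result on the completion table.
            std::lock_guard<std::mutex> lk(m_);
            completion_table_.push([on_done, slushie] { on_done(slushie); });
            cv_.notify_all();
        });
    }

    // Butler (proactor): deliver completions until no order is outstanding.
    // Any number of threads may call run(); each one is another butler.
    void run() {
        std::unique_lock<std::mutex> lk(m_);
        for (;;) {
            cv_.wait(lk, [this] { return !completion_table_.empty() || outstanding_ == 0; });
            if (completion_table_.empty()) return;  // nothing left to deliver
            std::function<void()> delivery = std::move(completion_table_.front());
            completion_table_.pop();
            lk.unlock();
            delivery();  // the completion handler runs on the butler's thread
            lk.lock();
            if (--outstanding_ == 0) cv_.notify_all();  // wake idle butlers so they can leave
        }
    }

    ~toy_proactor() {
        for (auto& t : blenders_) t.join();
    }

private:
    std::mutex m_;
    std::condition_variable cv_;
    std::queue<std::function<void()>> completion_table_;
    std::vector<std::thread> blenders_;
    int outstanding_ = 0;
};
```

As with `io_service::run()`, calling `run()` when nothing is outstanding returns immediately, and two butlers can share the deliveries between them.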


Additional Insights:

- The story also touches on scenarios like over-provisioning (too many butlers), inefficiency (no butlers, which leaves the completion table cluttered), and edge cases (children leaving or people canceling orders).

- It highlights the importance of managing asynchronous tasks and the flow of operations to maintain efficiency and prevent system overload.


Purpose:

- To offer a mental model for understanding the proactor pattern, making it easier to grasp the functionality and importance of each component in asynchronous operations.

- The narrative demonstrates real-world dynamics of asynchronous processing, emphasizing the need for a structured approach to handling concurrent operations and events.

From the story illustrating the proactor model, several practical lessons can be drawn and applied to better understand and utilize this asynchronous pattern.

Activity Confinement:

- All activity for an asynchronous operation, like the slushie-making inside the shack, is contained within the asynchronous operation processor; the application is not involved again until the result is delivered.

Result Delivery:

- The butler (handler thread) is responsible for delivering the outcome of asynchronous operations (slushies) to the completion handler (Johnny). The application (represented by the family) provides this handler thread: in ASIO, completion handlers are invoked only on threads that the application hands to the `io_service` by calling `run()` (or a related function such as `poll()`).


Resource Ownership:

- The application supplies and owns the resources, like the cup (memory), used in asynchronous operations. This ownership highlights the importance of managing resources effectively within the application's control.

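Reactor vs. Proactor:

- In the reactor pattern, the application is told when an operation can proceed (for example, a socket is readable) and then performs the operation itself; in the proactor pattern, the operation is carried out on the application's behalf and the application is told when it has completed.

- Handlers also differ in how they cope with multiple results. Some completion handlers (Johnny) may not handle multiple results well, similar to code that isn't re-entrant. Others (Susie) can handle multiple asynchronous events simultaneously without issue.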


Maintaining Scope:

- It's crucial not to exit the scope or context (leave the beach) while an asynchronous operation is in progress for you. This ensures that the completion handler remains available to receive the operation's outcome.


Efficient Handling:

- A limited number of handler threads (butlers) can manage multiple completion routines effectively. This efficiency reduces the need for excessive threads and resources, optimizing the system's performance.

Asio basics

```cpp
#include <boost/asio.hpp>
#include <boost/date_time/posix_time/posix_time.hpp>
#include <chrono>
#include <iostream>
#include <string>
#include <thread>

namespace asio = boost::asio;
namespace posix_time = boost::posix_time;

// util::get_time_now_as_string() is a helper (not shown) that returns the
// current time as a string.

void snippet1() {
  asio::io_service service;

  // The deadline timer takes the io_service as its first argument, followed by
  // the expiry time. This constructor sets the expiry relative to now (the
  // moment the timer is constructed); async_wait does not restart the clock.
  asio::deadline_timer timer(service, posix_time::seconds(5));

  // async_wait registers a completion handler to be called when the timer
  // expires. This handler does nothing except print the current time and
  // report that the timer expired.
  timer.async_wait([](auto... vn) {
    std::cout << util::get_time_now_as_string() << " : timer expired.\n";
  });

  std::cout << util::get_time_now_as_string() << " : calling run\n";

  // service.run() processes asynchronous events, which here means waiting for
  // the timer to expire. It plays the butler's role from the story: it
  // delivers completion events as they occur. Every thread that calls run()
  // is one more thread that can deliver completions.
  service.run();

  // Overall: async_wait submits a request (here, just a timer) that sits
  // waiting to be done. Once the timer expires, its completion is placed on
  // the completion queue, and the next available thread inside run() picks it
  // up and invokes the completion handler.
  std::cout << util::get_time_now_as_string() << " : done.\n";
}

// Handler used by the following snippets: it prints that it entered, sleeps
// for three seconds to simulate a slow handler, then prints that it is
// leaving. The timers below all expire at about the same time (after five
// seconds), so each of them produces a completion at roughly that moment.
void timer_expired(std::string id) {
  std::cout << util::get_time_now_as_string() << " " << id << " enter.\n";
  std::this_thread::sleep_for(std::chrono::seconds(3));
  std::cout << util::get_time_now_as_string() << " " << id << " leave.\n";
}
```

```cpp
void snippet2() {
  asio::io_service service;

  // Two timers, both expiring after five seconds. We start an async_wait on
  // each and use a single thread to call run() on the io_service.
  asio::deadline_timer timer1(service, posix_time::seconds(5));
  asio::deadline_timer timer2(service, posix_time::seconds(5));

  timer1.async_wait([](auto... vn) { timer_expired("timer1"); });
  timer2.async_wait([](auto... vn) { timer_expired("timer2"); });

  // Both timer_expired() completions land on the completion queue, but there
  // is only one thread, so it handles them one at a time: whichever reached
  // the queue first runs first, then the other. This is the butler who can
  // deliver only one slushie at a time.
  //
  // How does run() know when it is done? It returns once the proactor (the
  // slushie shack) has nothing left to do: no pending operations and nothing
  // on the completion queue.
  std::thread butler([&]() { service.run(); });
  butler.join();

  std::cout << "done." << std::endl;
}
```

```cpp
void snippet3() {
  // This used to show interleaved output because two separate threads are
  // running service.run(), so both handlers can execute at the same time.
  // With a newer Boost (version not recorded) the output looked fine, but
  // nothing guarantees that; snippet5 serializes the handlers with a strand.
  asio::io_service service;

  asio::deadline_timer timer1(service, posix_time::seconds(5));
  asio::deadline_timer timer2(service, posix_time::seconds(5));

  timer1.async_wait([](auto... vn) { timer_expired("timer1"); });
  timer2.async_wait([](auto... vn) { timer_expired("timer2"); });

  std::thread ta([&]() { service.run(); });
  std::thread tb([&]() { service.run(); });
  ta.join();
  tb.join();

  std::cout << "done." << std::endl;
}
```

```cpp
void snippet4() {
  asio::io_service service;

  // post() is the equivalent of the owner placing items directly on the
  // completion table: there is no I/O to fetch and no timer, just a handler
  // that goes straight onto the completion queue for the next thread to pick up.
  service.post([] { std::cout << "eat\n"; });
  service.post([] { std::cout << "drink\n"; });
  service.post([] { std::cout << "and be merry!\n"; });

  std::thread butler([&] { service.run(); });
  butler.join();

  // In essence this gives us a work queue. A very common pattern with ASIO is
  // a communication layer with its own io_service plus a second io_service
  // that does the heavy processing, with the two layers doing nothing more
  // than passing work to each other. You give the communication layer as many
  // threads as communication needs, post incoming items to the processing
  // io_service, and give that work queue as many threads as the processing
  // needs.
  std::cout << "done." << std::endl;
}
```

```cpp
void snippet5() {
  // An improved version of snippet3.
  //
  // We create a strand from the io_context's executor and, instead of giving
  // the completion handler to async_wait directly, bind it to the strand with
  // bind_executor. The strand guarantees that no two handlers bound to it run
  // at the same time. There may be many threads and many items on the
  // completion queue, but handlers bound to the same strand are never invoked
  // concurrently, which takes care of the threading issues between them.
  asio::io_context service;
  auto strand = asio::make_strand(service.get_executor());  // !!!

  asio::deadline_timer timer1(service, posix_time::seconds(5));
  asio::deadline_timer timer2(service, posix_time::seconds(5));

  timer1.async_wait(asio::bind_executor(
      strand, [](auto... vn) { timer_expired("timer1"); }));  // !!!
  timer2.async_wait(asio::bind_executor(
      strand, [](auto... vn) { timer_expired("timer2"); }));  // !!!

  std::thread ta([&]() { service.run(); });
  std::thread tb([&]() { service.run(); });
  ta.join();
  tb.join();

  std::cout << "done.\n";
}
```

```cpp
void snippet6() {
  // Add a third timer, expiring after six seconds, whose handler is NOT bound
  // to the strand. Timers one and two (five seconds, same strand) expire
  // together; timer three (six seconds, no strand) expires a second later.
  //
  // Expected: one and two are serialized, in a non-deterministic order
  // (whichever reaches the completion queue first), and because a second
  // thread is free, timer three runs alongside them. The observed output
  // matches: timer1 enters; a second later timer3 enters; timer1 leaves and
  // timer2 enters; timer3 leaves; finally timer2 leaves.
  asio::io_context service;
  auto strand = asio::make_strand(service.get_executor());

  asio::deadline_timer timer1(service, posix_time::seconds(5));
  asio::deadline_timer timer2(service, posix_time::seconds(5));
  asio::deadline_timer timer3(service, posix_time::seconds(6));

  timer1.async_wait(asio::bind_executor(
      strand, [](auto... vn) { timer_expired("timer1"); }));  // !!!
  timer2.async_wait(asio::bind_executor(
      strand, [](auto... vn) { timer_expired("timer2"); }));  // !!!
  timer3.async_wait(
      [](auto... vn) { timer_expired("timer3"); });  // not in strand

  std::thread ta([&]() { service.run(); });
  std::thread tb([&]() { service.run(); });
  ta.join();
  tb.join();

  // Anything that must be serialized belongs in a strand. For example, we
  // cannot write to a TCP socket from multiple threads at the same time; that
  // is a disaster. So writes should be wrapped in a strand, protecting access
  // to the writing process.
  std::cout << "done.\n";
}
```

Buffers

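```cpp
// Assumes socket_ is a connected asio::ip::tcp::socket and that data/size
// describe memory the application owns. asio::buffer() only creates a view;
// nothing is copied.
socket_.send(asio::buffer(data, size));

std::string personal_message("dinner time!");
socket_.send(asio::buffer(personal_message));

std::array<uint8_t, 4> code = {0xde, 0xad, 0xbe, 0xef};
socket_.send(asio::buffer(code));
```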

Buffers as Views:

- Buffers in ASIO act as views into data, like the cup from the story: the application owns the memory.

- The memory backing a buffer must not be deallocated or go out of scope while ASIO is still using it (for an asynchronous operation, until its completion handler has been called); otherwise the program can crash.

Types of Buffers:

- Mutable Buffers: Can be modified. They allow ASIO to write data into the memory they view, as in a receive.

- Const Buffers: Read-only. They are used for operations such as sends, where ASIO only reads the memory and never modifies it.

Conversion: Mutable buffers can be converted into const buffers, but not the other way around, so a writable buffer can be passed wherever a read-only one is expected without giving up const-correctness.
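The view semantics and the one-way conversion can be sketched with two hypothetical types, `toy_mutable_buffer` and `toy_const_buffer`. They only mimic the idea behind ASIO's `mutable_buffer` and `const_buffer` (a pointer plus a size that never owns the memory); they are not ASIO's types, and `toy_buffer`, `toy_receive`, and `toy_send` are illustrative names.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Hypothetical stand-ins for ASIO's buffer types: a pointer plus a size.
// Neither type owns the memory it refers to.
struct toy_mutable_buffer {
    void* data;
    std::size_t size;
};

struct toy_const_buffer {
    const void* data;
    std::size_t size;
    toy_const_buffer(const void* d, std::size_t n) : data(d), size(n) {}
    // Implicit mutable -> const conversion; there is no const -> mutable one.
    toy_const_buffer(toy_mutable_buffer b) : data(b.data), size(b.size) {}
};

// Views over application-owned strings (the "cups").
toy_mutable_buffer toy_buffer(std::string& s) { return {&s[0], s.size()}; }
toy_const_buffer toy_buffer(const std::string& s) { return {s.data(), s.size()}; }

// A receive writes through a mutable view; returns the number of bytes stored.
std::size_t toy_receive(toy_mutable_buffer b, const std::string& incoming) {
    std::size_t n = incoming.size() < b.size ? incoming.size() : b.size;
    char* out = static_cast<char*>(b.data);
    for (std::size_t i = 0; i < n; ++i) out[i] = incoming[i];
    return n;
}

// A send only reads, so a const view is enough.
std::string toy_send(toy_const_buffer b) {
    return std::string(static_cast<const char*>(b.data), b.size);
}
```

Passing a mutable view to `toy_send` compiles because of the converting constructor; passing a const view to `toy_receive` does not, which is the same asymmetry the text describes.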


Scatter-Gather I/O:

- This technique involves using multiple buffers for a single I/O operation, akin to a Direct Memory Access (DMA) operation. This can improve efficiency by avoiding the need to copy data into a single contiguous buffer before sending or after receiving.

- ASIO supports scatter-gather I/O, enabling efficient sending or receiving of data distributed across multiple buffers.
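Conceptually, a gather write walks a list of separate views and emits them as one stream, and a scatter read fills several separate buffers in order from one stream. The sketch below models that with a `std::string` standing in for the socket; `cview`, `mview`, `gather_write`, and `scatter_read` are hypothetical names, and a real implementation hands the whole list to the operating system (for example through a vectored write) rather than copying in user code.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstring>
#include <string>
#include <vector>

struct cview { const char* data; std::size_t size; };  // read-only view (hypothetical)
struct mview { char* data; std::size_t size; };        // writable view (hypothetical)

// Gather: emit several separate buffers as one stream, in order.
// `wire` stands in for the socket.
void gather_write(std::string& wire, const std::vector<cview>& bufs) {
    for (const cview& b : bufs) wire.append(b.data, b.size);
}

// Scatter: fill several separate buffers, in order, from one incoming stream.
// Returns the number of bytes placed into the buffers.
std::size_t scatter_read(const std::string& wire, const std::vector<mview>& bufs) {
    std::size_t pos = 0;
    for (const mview& b : bufs) {
        std::size_t n = std::min(b.size, wire.size() - pos);
        if (n > 0) std::memcpy(b.data, wire.data() + pos, n);
        pos += n;
    }
    return pos;
}
```

A fixed-size header buffer followed by a larger body buffer is the typical use: the header lands in its own buffer without the application splitting a combined buffer afterwards.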

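```cpp
// Gather write: three separate buffers go out in a single send() call,
// without first being copied into one contiguous buffer.
std::array<uint8_t, 4> head = {0xba, 0xbe, 0xfa, 0xce};
std::string msg("CppCon Rocks!");
std::vector<uint8_t> data(256);

std::vector<asio::const_buffer> bufs{asio::buffer(head), asio::buffer(msg),
                                     asio::buffer(data)};
socket_.send(bufs);
```

Servers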

Concept of a Chat Server

- A chat server allows multiple clients to connect and communicate. Messages sent by one client are broadcast to all other connected clients.

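- The acceptor listens on a single port. Each accepted connection gets its own socket for the ongoing communication (on the server side it still uses the listening port; connections are told apart by the client's address and port), leaving the acceptor free to accept the next client.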

Ownership of Client Handlers

- Client Handler Role: Represents the connection between a client and the server.

- Ownership Challenge: Determining who owns the client handler objects and is responsible for their lifecycle (creation, maintenance, and destruction).

Common Design Approach

- A manager object often assumes ownership, maintaining a list of client handlers, periodically checking their connection status, and cleaning up disconnected handlers.

- This approach, while common, might indicate a design flaw, as it offloads the responsibility of lifecycle management to a separate entity that constantly checks resource validity.

Ownership and Lifecycle Management Insights

- Ideal Ownership Model: Client handlers should manage their own lifecycle, existing as long as they have work to do (sending/receiving data) or until the client disconnects.

- Self-Maintenance: A client handler knows best when it no longer has a connection or work to do, including managing internal buffers and completion handlers.

Implementation Strategy

- Self-Sustaining Design: The mechanism for keeping a client handler alive should rely on the handler itself performing necessary checks and tasks.

- Use of Completion Handlers: Through completion handlers in asynchronous operations (like reading from a buffer), a client handler can decide whether to initiate further reads based on the presence of errors or the completion of its tasks.
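The self-sustaining idea can be sketched without ASIO: each pending read holds a `shared_ptr` to the handler, and the handler re-arms a read only while it has work. Here a hypothetical `toy_loop` (a queue of pending completions) stands in for the `io_service`, and a scripted list of packets stands in for the socket; running out of packets plays the role of the peer disconnecting. All names are illustrative.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <functional>
#include <memory>
#include <string>
#include <vector>

// Hypothetical single-threaded stand-in for an io_service.
struct toy_loop {
    std::deque<std::function<void()>> pending;
    void run() {
        while (!pending.empty()) {
            auto h = std::move(pending.front());
            pending.pop_front();
            h();  // h (and any shared_ptr it holds) is destroyed after this call
        }
    }
};

// A connection handler kept alive only by its own pending reads.
class toy_connection : public std::enable_shared_from_this<toy_connection> {
public:
    toy_connection(toy_loop& loop, std::vector<std::string> incoming,
                   std::vector<std::string>& events)
        : loop_(loop), incoming_(std::move(incoming)), events_(events) {}
    ~toy_connection() { events_.push_back("destroyed"); }

    void start() { read_packet(); }

private:
    void read_packet() {
        // "Start" an async read; the completion captures a shared_ptr to us.
        loop_.pending.push_back([me = shared_from_this()] {
            bool error = me->next_ >= me->incoming_.size();  // peer hung up
            me->read_packet_done(error);
        });
    }
    void read_packet_done(bool error) {
        if (error) return;  // no new read: the last reference goes away
        events_.push_back(incoming_[next_++]);
        read_packet();      // still has work: stay alive
    }

    toy_loop& loop_;
    std::vector<std::string> incoming_;
    std::vector<std::string>& events_;
    std::size_t next_ = 0;
};
```

Nobody keeps a list of connections here: once the last read completes with an error and is not re-armed, the handler destroys itself.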

```cpp
// Assumes <boost/asio.hpp>, <memory>, <thread>, <vector> and
// namespace asio = boost::asio;
template <typename ConnectionHandler>
class asio_generic_server {
  using shared_handler_t = std::shared_ptr<ConnectionHandler>;

 public:
  asio_generic_server(int thread_count = 1)
      : thread_count_(thread_count), acceptor_(io_service_) {}

  // Stop the io_service and join the pool before members are destroyed;
  // destroying a joinable std::thread would call std::terminate.
  ~asio_generic_server() {
    io_service_.stop();
    for (auto& t : thread_pool_) t.join();
  }

  void start_server(uint16_t port) {
    auto handler = std::make_shared<ConnectionHandler>(io_service_);

    // set up the acceptor to listen on the tcp port
    asio::ip::tcp::endpoint endpoint(asio::ip::tcp::v4(), port);
    acceptor_.open(endpoint.protocol());
    acceptor_.set_option(asio::ip::tcp::acceptor::reuse_address(true));
    acceptor_.bind(endpoint);
    acceptor_.listen();

    acceptor_.async_accept(handler->socket(), [this, handler](auto ec) {
      handle_new_connection(handler, ec);
    });

    // start pool of threads to process the asio events
    for (int i = 0; i < thread_count_; ++i) {
      thread_pool_.emplace_back([this] { io_service_.run(); });
    }
  }

 private:
  void handle_new_connection(shared_handler_t handler,
                             boost::system::error_code const& error) {
    if (error) {
      return;
    }
    handler->start();

    auto new_handler = std::make_shared<ConnectionHandler>(io_service_);
    acceptor_.async_accept(new_handler->socket(), [this, new_handler](auto ec) {
      handle_new_connection(new_handler, ec);
    });
  }

  int thread_count_;
  std::vector<std::thread> thread_pool_;
  asio::io_service io_service_;
  asio::ip::tcp::acceptor acceptor_;
};
```

Key Components and Their Roles

- `shared_handler_t` Type Alias: Defines a `std::shared_ptr<ConnectionHandler>` for managing connection handlers with shared ownership.
- Constructor and Initialization:
  - Accepts an `int thread_count` to specify the number of threads in the thread pool.
  - Initializes an ASIO acceptor to manage incoming connections.

Server Startup Process

- Creating a Handler for New Connections:
  - A shared pointer to a `ConnectionHandler` is created and passed the `io_service` for asynchronous operations.
- Setting Up the Acceptor:
  - Configures the acceptor to listen on a specified TCP port, setting options like `reuse_address` and binding to the endpoint.
- Accepting Connections Asynchronously:
  - Calls `acceptor_.async_accept` to asynchronously accept incoming connections, passing the handler's socket and a lambda function as the completion handler. This lambda calls `handle_new_connection` upon accepting a connection.
- Thread Pool Creation:
  - Initializes a pool of threads (based on `thread_count_`), each executing `io_service_.run()` to process ASIO events.

Handling New Connections

- `handle_new_connection` Method:
  - Checks for errors in the incoming connection. On error, it returns immediately, which lets the handler's resources be released; because no new `async_accept` is issued in that case, it also ends the accept loop.
  - Otherwise, calls `handler->start()` to initiate handling of the new connection.
  - Prepares for the next connection by creating a new `ConnectionHandler` instance and setting up another asynchronous accept call with it.

Design Considerations

- Shared Ownership of Handlers: Using `std::shared_ptr` for connection handlers ties their lifetime to the pending asynchronous operations that reference them; the server itself keeps no list of handlers.
- Asynchronous Accept Loop: Continuously prepares a new handler for incoming connections, ensuring the server can accept multiple connections over its lifetime without blocking.
- Error Handling: The check for errors before proceeding with a connection ensures that only valid connections are processed.

- Thread Pool for Concurrency: Utilizes a configurable number of threads to handle I/O operations concurrently, improving the server's ability to scale with multiple simultaneous connections.

No Need for std::ref with io_service

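- The `io_service` is passed to each connection handler by reference without `std::ref`. `std::make_shared` perfectly forwards its arguments to the constructor, so the handler's `io_service&` parameter binds directly to `io_service_`, and the server and all handlers share one `io_service`. `std::ref` is only needed where arguments are copied, as with `std::thread` or `std::bind`; an `io_service` is non-copyable in any case.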
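A small standalone sketch of the difference, using hypothetical stand-ins (`service_like` for the non-copyable `io_service`, `handler_like` for a connection handler): `std::make_shared` binds the reference directly, while `std::thread` needs `std::ref`.

```cpp
#include <cassert>
#include <functional>
#include <memory>
#include <thread>

struct service_like {  // non-copyable, like io_service (hypothetical)
    service_like() = default;
    service_like(const service_like&) = delete;
    service_like& operator=(const service_like&) = delete;
    int runs = 0;
};

struct handler_like {  // stands in for a ConnectionHandler (hypothetical)
    explicit handler_like(service_like& s) : service(s) {}
    service_like& service;
};

void run_service(service_like& s) { ++s.runs; }

void start_handler_and_thread(service_like& svc) {
    // make_shared forwards the lvalue, so it binds to the reference parameter.
    auto h = std::make_shared<handler_like>(svc);
    assert(&h->service == &svc);

    // std::thread decay-copies its arguments, so a reference parameter needs
    // std::ref; std::thread t(run_service, svc) would not compile.
    std::thread t(run_service, std::ref(svc));
    t.join();
}
```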

ClientHandler to pass to asio_generic_server

```cpp
// Assumes <boost/asio.hpp>, <deque>, <istream>, <memory>, <string> and
// namespace asio = boost::asio;
class chat_handler : public std::enable_shared_from_this<chat_handler> {
 public:
  chat_handler(asio::io_service& service)
      : service_(service), socket_(service), write_strand_(service) {}

  asio::ip::tcp::socket& socket() { return socket_; }

  void start() { read_packet(); }

 private:
  asio::io_service& service_;
  asio::ip::tcp::socket socket_;
  asio::io_service::strand write_strand_;
  asio::streambuf in_packet_;
  std::deque<std::string> send_packet_queue;

  void read_packet() {
    asio::async_read_until(
        socket_, in_packet_, '\0',
        [me = shared_from_this()](boost::system::error_code const& ec,
                                  std::size_t bytes_xfer) {
          me->read_packet_done(ec, bytes_xfer);
        });
  }

  void read_packet_done(boost::system::error_code const& error,
                        std::size_t bytes_transferred) {
    if (error) {
      return;
    }

    // Extract one '\0'-terminated packet; getline also consumes the delimiter.
    // (operator>> would stop at whitespace and leave the '\0' in the buffer.)
    std::istream stream(&in_packet_);
    std::string packet_string;
    std::getline(stream, packet_string, '\0');

    // do something with it

    read_packet();
  }

  //-----------------------------send related logic-----------------------------
 public:
  void send(std::string msg) {
    // The message must be captured along with the shared pointer.
    service_.post(write_strand_.wrap(
        [me = shared_from_this(), msg = std::move(msg)]() {
          me->queue_message(msg);
        }));
  }

 private:
  void queue_message(std::string message) {
    bool write_in_progress = !send_packet_queue.empty();
    send_packet_queue.push_back(std::move(message));
    if (!write_in_progress) {
      start_packet_send();
    }
  }

  void start_packet_send() {
    // Append the terminator character; += "\0" would append nothing, because
    // the literal is read as an empty C string.
    send_packet_queue.front() += '\0';
    asio::async_write(
        socket_, asio::buffer(send_packet_queue.front()),
        write_strand_.wrap([me = shared_from_this()](
                               boost::system::error_code const& ec, std::size_t) {
          me->packet_send_done(ec);
        }));
  }

  void packet_send_done(boost::system::error_code const& error) {
    if (!error) {
      send_packet_queue.pop_front();
      if (!send_packet_queue.empty()) {
        start_packet_send();
      }
    }
  }
};
```

chat_handler Class Overview

- Inherits from `std::enable_shared_from_this` to allow instances to manage their own lifetimes by creating shared pointers to `this`.
- Manages socket communications and asynchronous reads from clients.

Key Components

- Constructor Parameters: Receives an `asio::io_service` reference to perform asynchronous operations.
- Socket Management: Utilizes an `asio::ip::tcp::socket` for client communications.
- Write Operations Synchronization: Uses an `asio::io_service::strand` to ensure write operations are executed without race conditions in a multi-threaded environment.
- Incoming Data Handling: Employs an `asio::streambuf` (`in_packet_`) for buffering incoming packet data.

Asynchronous Reading Process

- Initial Read Setup: The `start()` method begins the asynchronous read operation by calling `read_packet()`.
- Reading Data: `read_packet()` uses `asio::async_read_until()` to read data into `in_packet_` until a null terminator is encountered, signifying the end of a packet.
- Completion Handler: A lambda captures a shared pointer to `this` (`me`) and is passed as the completion handler to `async_read_until()`. This mechanism extends the lifetime of the handler instance until the read operation is complete.
- Processing Read Data: Upon completion, `read_packet_done()` processes the received data and initiates another read operation by calling `read_packet()` again.

Lifetime Management

- Self-Managing Lifetime: By capturing a shared pointer to `this` in the asynchronous operations' completion handlers, `chat_handler` instances remain alive as long as necessary (i.e., until there are no more pending asynchronous operations).
- Automated Cleanup: Once there are no more references (all asynchronous operations have completed and there are no external references), the instance is automatically destroyed.

Design Patterns and Practices

- Curiously Recurring Template Pattern (CRTP): `chat_handler` inherits from `std::enable_shared_from_this<chat_handler>`, a use of CRTP that provides the ability to create shared pointers to the current instance.
- Asynchronous and Concurrent Design: The design exemplifies asynchronous programming principles and concurrency management with strands, ensuring safe and efficient handling of multiple client connections.

Use of async_read_until

- Functionality: Reads data from the socket into a buffer until a specified delimiter or condition is met. This is useful for parsing protocol-specific messages or packets where the end of a message can be clearly identified. The buffer may end up holding data beyond the delimiter; that data stays in the buffer for the next read.

- Flexibility: Allows the use of custom delimiters or conditions, including regular expressions, to determine the end of a read operation.

Concerns and Recommendations

- Security Risks: Using `async_read_until` with unconstrained or complex conditions can introduce security vulnerabilities. For example, an attacker can keep sending data that never contains the delimiter, making the buffer grow without bound and exhausting system resources.

- Production Code Caution: While convenient for prototypes or demonstrations, relying solely on `async_read_until` for message boundary detection in production code is discouraged due to potential inefficiencies and security concerns.

- Recommended Approach:

  - Read What's Available: Instead of waiting for a specific pattern or delimiter, read whatever data is available from the socket. This keeps the amount of buffered data under the application's control and simplifies data handling.

  - Implement Constraints: Apply constraints on the amount of data read or the conditions under which data is processed. This can include setting maximum message sizes or validating message integrity before processing.

  - Buffer Management: Design your own buffering strategy that respects these constraints, ensuring that data processing is both efficient and secure.
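A minimal sketch of the recommended approach for the `'\0'`-delimited protocol used by `chat_handler`, assuming the application reads whatever is available (for example with `async_read_some` into a fixed-size buffer) and feeds each chunk to a hypothetical `bounded_framer`. The framer extracts complete messages and refuses to buffer an unfinished message beyond a chosen limit, so a peer that never sends the delimiter cannot make memory grow without bound.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Splits a byte stream into '\0'-terminated messages with a size limit.
class bounded_framer {
public:
    explicit bounded_framer(std::size_t max_size) : max_size_(max_size) {}

    // Feed one chunk as it arrives; complete messages are appended to `out`.
    // Returns false if a message exceeds max_size (the caller should close).
    bool feed(const char* data, std::size_t n, std::vector<std::string>& out) {
        for (std::size_t i = 0; i < n; ++i) {
            if (data[i] == '\0') {
                out.push_back(std::move(partial_));
                partial_.clear();
            } else if (partial_.size() == max_size_) {
                return false;  // oversized message: stop instead of growing
            } else {
                partial_.push_back(data[i]);
            }
        }
        return true;
    }

private:
    std::size_t max_size_;
    std::string partial_;  // bytes of the current, unfinished message
};
```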

Practical Implications

- Performance and Security: Properly managing read operations and buffer sizes can significantly enhance the application's performance and security posture. It prevents common vulnerabilities associated with unbounded reads and ensures the application can handle varying data rates and sizes.

- Protocol Design Considerations: When designing a communication protocol or handling incoming data, consider how message boundaries are defined and detected. Opt for clear, simple delimiters or message length prefixes that allow for straightforward and secure parsing.
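For the length-prefix alternative, here is a sketch of one possible wire format (a hypothetical choice: a 4-byte big-endian length followed by the payload). The decoder leaves partial frames in the buffer for the next read and rejects an oversized length before buffering the payload; `encode_frame` and `decode_frames` are illustrative names.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical wire format: 4-byte big-endian length, then the payload.
std::string encode_frame(const std::string& payload) {
    assert(payload.size() <= 0xffffffffu);
    std::uint32_t n = static_cast<std::uint32_t>(payload.size());
    std::string out;
    out.push_back(static_cast<char>((n >> 24) & 0xff));
    out.push_back(static_cast<char>((n >> 16) & 0xff));
    out.push_back(static_cast<char>((n >> 8) & 0xff));
    out.push_back(static_cast<char>(n & 0xff));
    out += payload;
    return out;
}

// Moves every complete frame from `buf` into `out`, leaving a partial frame
// in place. Returns false if a header announces more than max_payload bytes.
bool decode_frames(std::string& buf, std::size_t max_payload,
                   std::vector<std::string>& out) {
    for (;;) {
        if (buf.size() < 4) return true;  // header not complete yet
        std::uint32_t n = 0;
        for (int i = 0; i < 4; ++i)
            n = (n << 8) | static_cast<unsigned char>(buf[i]);
        if (n > max_payload) return false;  // reject before buffering it
        if (buf.size() < 4 + static_cast<std::size_t>(n)) return true;  // payload incomplete
        out.push_back(buf.substr(4, n));
        buf.erase(0, 4 + static_cast<std::size_t>(n));
    }
}
```

Because the length arrives first, the receiver knows how much to expect and can enforce the limit without scanning the payload for a delimiter.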

On the send-related logic

Asynchronous Sending Mechanism

- Thread-Safe Writes: Utilizes `asio::io_service::strand` to serialize access to the socket for write operations, ensuring thread safety without using explicit locking mechanisms.
- Queue Management: Messages to be sent are stored in a `std::deque<std::string>` (`send_packet_queue`), allowing for sequential processing of outgoing messages.
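To illustrate how a strand serializes handlers without the callers taking a lock, here is a hypothetical `toy_strand` in standard C++. It is a simplification, not ASIO's design: a real strand hands queued handlers back to the underlying executor, whereas this toy runs them on whichever thread found the strand idle. The `max_concurrency` helper (also hypothetical) checks that at most one handler ever runs at a time while several threads submit work.

```cpp
#include <atomic>
#include <cassert>
#include <functional>
#include <mutex>
#include <queue>
#include <thread>
#include <vector>

class toy_strand {
public:
    // Callable from any thread. If no handler is running on this strand, the
    // calling thread drains the queue; otherwise the handler is queued and the
    // thread already draining will run it. Handlers never overlap.
    void dispatch(std::function<void()> h) {
        {
            std::lock_guard<std::mutex> lk(m_);
            q_.push(std::move(h));
            if (running_) return;
            running_ = true;
        }
        for (;;) {
            std::function<void()> next;
            {
                std::lock_guard<std::mutex> lk(m_);
                if (q_.empty()) { running_ = false; return; }
                next = std::move(q_.front());
                q_.pop();
            }
            next();  // runs outside the lock, but never concurrently with another
        }
    }

private:
    std::mutex m_;
    std::queue<std::function<void()>> q_;
    bool running_ = false;
};

// Several threads dispatch handlers that touch a plain (non-atomic) counter.
// Returns the largest number of handlers ever observed running at once.
int max_concurrency(toy_strand& strand, int threads, int per_thread, long& counter) {
    std::atomic<int> in_flight{0}, max_seen{0};
    std::vector<std::thread> pool;
    for (int t = 0; t < threads; ++t)
        pool.emplace_back([&] {
            for (int i = 0; i < per_thread; ++i)
                strand.dispatch([&] {
                    int now = ++in_flight;
                    int prev = max_seen.load();
                    while (now > prev && !max_seen.compare_exchange_weak(prev, now)) {}
                    ++counter;  // safe: the strand serializes handlers
                    --in_flight;
                });
        });
    for (auto& th : pool) th.join();
    return max_seen;
}
```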

Process Flow for Sending Messages

- Public `send` Method: Externally exposed method that takes a message string to be sent to the client.
  - Posts the message handling task to the ASIO service object, wrapped in the write strand, ensuring thread-safe access.
  - Captures `shared_from_this()` (along with the message) to keep the object alive until the posted task is executed.
- Private `queue_message` Method: Queues the message for sending and starts the send operation if no other send operation is currently in progress.
  - Checks whether `send_packet_queue` was empty before adding the message, to determine whether a write operation is already underway. Only one `async_write` may be in flight at a time, because concurrent writes on the same socket could interleave their data.
- Private `start_packet_send` Method: Initiates an asynchronous write operation for the next message in the queue.
  - Appends a null terminator to the message string for delineation (the character `'\0'`; appending the literal `"\0"` adds nothing, because it is read as an empty C string).
  - Uses `async_write` with the socket and an ASIO buffer created from the message at the front of the queue. The message stays in the queue until the write completes, so the memory the buffer views remains valid.
- Completion Handler for Write Operations: Defined within the `start_packet_send` method via a lambda that calls `packet_send_done`.
  - The lambda again captures `shared_from_this()`, extending the object's lifetime until the write operation completes.
- Private `packet_send_done` Method: Handles the completion of a write operation.
  - On a successful write, removes the sent message from the queue.
  - If the queue is not empty, initiates a send operation for the next message.
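The queueing logic can be exercised without a socket. In this sketch a hypothetical `toy_loop` stands in for the `io_service` and strand (single-threaded, so no locking is needed), and the fake write appends to a `wire` string before queuing its completion. Method names mirror `chat_handler`'s, but `toy_writer` itself is only an illustration.

```cpp
#include <cassert>
#include <deque>
#include <functional>
#include <string>

// Hypothetical single-threaded stand-in for io_service + strand.
struct toy_loop {
    std::deque<std::function<void()>> pending;
    void run() {
        while (!pending.empty()) {
            auto h = std::move(pending.front());
            pending.pop_front();
            h();
        }
    }
};

class toy_writer {
public:
    explicit toy_writer(toy_loop& loop) : loop_(loop) {}

    void queue_message(std::string message) {
        bool write_in_progress = !queue_.empty();
        queue_.push_back(std::move(message));
        if (!write_in_progress) start_packet_send();
    }

    std::string wire;              // everything "written to the socket", in order
    int max_writes_in_flight = 0;  // should never exceed 1

private:
    void start_packet_send() {
        queue_.front().push_back('\0');  // the terminator character
        if (++in_flight_ > max_writes_in_flight) max_writes_in_flight = in_flight_;
        wire += queue_.front();          // fake async_write: send the bytes...
        loop_.pending.push_back([this] { packet_send_done(); });  // ...complete later
    }
    void packet_send_done() {
        --in_flight_;
        queue_.pop_front();
        if (!queue_.empty()) start_packet_send();
    }

    toy_loop& loop_;
    std::deque<std::string> queue_;
    int in_flight_ = 0;
};
```

Messages queued while a write is in flight simply wait; each completion starts the next write, so order is preserved and writes never overlap.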

Error Handling and Lifecycle Management

- Error Checking: The completion handler (`packet_send_done`) checks for errors in the write operation. Currently it "bails" (does nothing) on error; this is where error handling logic would be implemented.
- Object Lifetime: Capturing `shared_from_this()` in the completion handler lambdas ensures the `chat_handler` instance remains alive as long as asynchronous operations are pending, managing its lifetime effectively.

Layered Design and ASIO Usage

- Layered Design: Favor a layered architecture, with a distinct separation between communication and processing layers.

- IO Services as Executors: Utilize IO services for executing work, separating communication tasks from processing tasks for efficiency and clarity.
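A sketch of the two-layer idea in standard C++, where a hypothetical `toy_executor` (a work queue plus its own threads) stands in for an `io_service` with threads calling `run()`. The communication layer has one thread and hands each item to a processing layer with three threads; the thread counts and the `process_all` helper are arbitrary illustrations.

```cpp
#include <atomic>
#include <cassert>
#include <condition_variable>
#include <functional>
#include <mutex>
#include <queue>
#include <thread>
#include <vector>

// Hypothetical work queue with its own threads ("io_service + run() threads").
class toy_executor {
public:
    explicit toy_executor(int threads) {
        for (int i = 0; i < threads; ++i) threads_.emplace_back([this] { work(); });
    }
    ~toy_executor() {  // finish all queued work, then stop the threads
        { std::lock_guard<std::mutex> lk(m_); stopping_ = true; }
        cv_.notify_all();
        for (auto& t : threads_) t.join();
    }
    void post(std::function<void()> f) {
        { std::lock_guard<std::mutex> lk(m_); q_.push(std::move(f)); }
        cv_.notify_one();
    }

private:
    void work() {
        for (;;) {
            std::function<void()> f;
            {
                std::unique_lock<std::mutex> lk(m_);
                cv_.wait(lk, [this] { return stopping_ || !q_.empty(); });
                if (q_.empty()) return;  // stopping and nothing left to do
                f = std::move(q_.front());
                q_.pop();
            }
            f();
        }
    }
    std::mutex m_;
    std::condition_variable cv_;
    std::queue<std::function<void()>> q_;
    bool stopping_ = false;
    std::vector<std::thread> threads_;  // declared last: started after the rest
};

// Communication layer (1 thread) hands each "received" item to the
// processing layer (3 threads), which does the heavy lifting.
long process_all(int messages) {
    std::atomic<long> total{0};
    {
        toy_executor processing(3);
        toy_executor communication(1);
        for (int i = 1; i <= messages; ++i)
            communication.post([&processing, &total, i] {
                processing.post([&total, i] { total += i; });  // pretend i was parsed
            });
    }  // communication drains first (reverse declaration order), then processing
    return total;
}
```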

Custom Services and Protocol Handling

- Creating Custom Services: It's straightforward to implement custom services in ASIO for various communication protocols or hardware interfaces.

- ASIO Spirit Client Handler: Example of integrating external libraries (like Boost.Spirit for parsing/generating byte streams) with ASIO for protocol-specific handling.

Concurrency and Async Patterns

- Completion Handlers and Coroutines: ASIO lets you drive asynchronous operations either with traditional completion handlers or with coroutine-based approaches.

- State Machines: Using state machines (e.g., Boost.MSM) alongside ASIO for structured and stateful asynchronous logic.

Testing Strategies

- Stubbing for Testability: Replace real sockets with stubs in testing environments to simulate and verify communication logic.

- Challenges in Testing Communications: Testing asynchronous communication systems requires thoughtful stubbing and mocking to accurately simulate network interactions.
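One way to make protocol logic stubbable is to write it against a template parameter instead of a concrete socket. The sketch below is synchronous for brevity, and its `write_some(const char*, std::size_t)` interface is deliberately simpler than ASIO's (which takes buffer sequences); `message_sender` and `stub_stream` are hypothetical. The stub accepts only a few bytes per call, so a test also exercises the partial-write path a real socket can hit.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Protocol logic written against any Stream providing
// std::size_t write_some(const char*, std::size_t).
template <typename Stream>
class message_sender {
public:
    explicit message_sender(Stream& s) : stream_(s) {}

    // Sends msg followed by '\0', looping because a write may be partial.
    void send(const std::string& msg) {
        std::string framed = msg;
        framed.push_back('\0');
        std::size_t done = 0;
        while (done < framed.size())
            done += stream_.write_some(framed.data() + done, framed.size() - done);
    }

private:
    Stream& stream_;
};

// Test stub: records bytes and accepts at most `chunk` bytes per call.
struct stub_stream {
    explicit stub_stream(std::size_t c) : chunk(c) {}
    std::size_t write_some(const char* data, std::size_t n) {
        assert(chunk > 0);  // a zero chunk would make send() loop forever
        ++calls;
        std::size_t k = n < chunk ? n : chunk;
        written.append(data, k);
        return k;
    }
    std::size_t chunk;
    std::string written;
    int calls = 0;
};
```

In production the same `message_sender` would be instantiated with a thin wrapper around the real socket.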

Separation of Concerns in Server Design

- Accepting Connections vs. Processing Work: Discussion on separating the thread handling new connections from those processing client communications, potentially using different IO services.

- Thread Pool Management: Strategies for dedicating specific IO services and thread pools to distinct tasks (e.g., accepting connections vs. handling ongoing communication).

Specialized Use Cases

- File IO: Mention of proposals and ongoing work for asynchronous file IO in ASIO, indicating the evolving nature of ASIO and its ecosystem.

- Beast Library: Highlighting Beast, a library built on top of ASIO for HTTP communications, showcasing the extensibility of ASIO for building higher-level communication services.